
To test this system, the researchers turned to what's called an Ising model, which is easiest to think of as a grid of electrons where each electron's spin influences that of its neighbors. That function can then have its noise set to zero to produce an estimate of what the processor would do without any noise at all. These measurements are used to estimate a function that produces similar output as the actual measurements.

So instead, the researchers turned to a method where they intentionally amplified and then measured the processor's noise at different levels. But as the number of qubits involved in the calculation goes up, this method gets a bit impractical to use-you have to do too much sampling. It's a means of measuring the typical errors, compensating for them after the fact and producing an estimate of the real result that's hidden within the noise.Īn early method of performing error mitigation (termed probabilistic error cancellation) involved sampling the behavior of the quantum processor to develop a model of the typical noise and then subtracting the noise from the measured output of an actual calculation. If we think of quantum error correction as a way to avoid the noise that keeps qubits from accurately performing operations, error mitigation can be viewed as accepting that the noise is inevitable. And they did so in a way that clearly outperformed similar calculations on classical computers. Using a technique termed "error mitigation," they managed to overcome the problems with today's qubits and produce an accurate result despite the noise in the system.

In a publication in today's Nature, IBM researchers make a strong case for the answer to that being yes. Given that we probably won't reach that point until the next decade at the earliest, it raises the question of whether quantum computers can do anything interesting in the meantime.

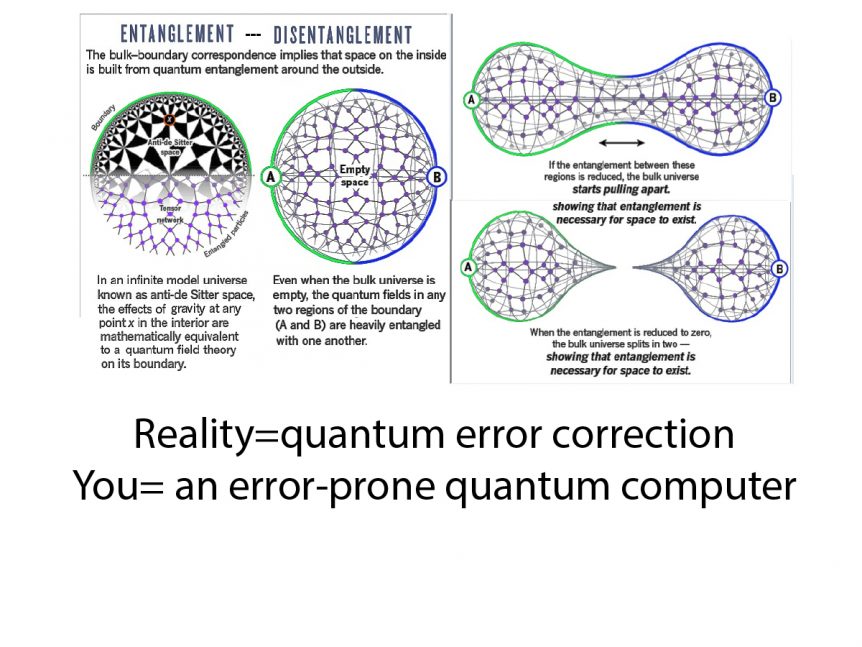

But these will require multiple high-quality qubits for every bit of information, meaning we'll need thousands of qubits that are better than anything we can currently make. Long term, the plan is to solve that using error-corrected qubits. If we try an operation that needs a lot of qubits, or a lot of operations on a smaller number of qubits, then errors become inevitable. While the probabilities are small-less than 1 percent in many cases-each operation we perform on each qubit, including basic things like reading its state, has a significant error rate. Today's quantum processors are error-prone.
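The noise-amplification idea described above (measure at several deliberately raised noise levels, fit a function, then evaluate it at zero noise) can be illustrated with a toy model. This is a minimal sketch under stated assumptions, not IBM's implementation: the hypothetical `noisy_expectation` function stands in for the processor and simply assumes the measured value decays exponentially as the noise gain grows, and `richardson_extrapolate` uses Richardson (polynomial) extrapolation, one common way of "setting the noise to zero," rather than whatever fit the researchers actually used.

```python
"""Toy zero-noise extrapolation (ZNE) sketch.

Assumptions (illustrative only, not IBM's method): the "processor" is a
hypothetical function whose measured expectation value decays
exponentially as its noise is amplified by a gain factor g >= 1.
"""
import math
from typing import Sequence


def noisy_expectation(gain: float, ideal: float = 0.8, error_rate: float = 0.05) -> float:
    """Hypothetical noisy measurement: the ideal value shrinks as exp(-error_rate * gain).

    gain = 1.0 stands for the processor's native noise level; gain > 1.0
    means the noise was deliberately amplified.
    """
    return ideal * math.exp(-error_rate * gain)


def richardson_extrapolate(gains: Sequence[float], values: Sequence[float]) -> float:
    """Evaluate, at gain = 0, the unique polynomial passing through every (gain, value) point.

    This is Lagrange interpolation evaluated at x = 0, i.e. Richardson extrapolation.
    """
    if len(gains) != len(values) or len(gains) < 2:
        raise ValueError("need at least two (gain, value) pairs of equal length")
    estimate = 0.0
    for i, (g_i, v_i) in enumerate(zip(gains, values)):
        # Lagrange basis polynomial L_i evaluated at 0:
        # product over j != i of (0 - g_j) / (g_i - g_j) = g_j / (g_j - g_i)
        weight = 1.0
        for j, g_j in enumerate(gains):
            if j != i:
                weight *= g_j / (g_j - g_i)
        estimate += weight * v_i
    return estimate


if __name__ == "__main__":
    gains = [1.0, 1.5, 2.0, 3.0]  # native noise plus three amplified levels
    measured = [noisy_expectation(g) for g in gains]
    print(f"raw value at native noise: {measured[0]:.4f}")
    print(f"zero-noise estimate:       {richardson_extrapolate(gains, measured):.4f}")
    print("ideal value:               0.8000")
```

Because Richardson extrapolation passes exactly through every measured point, it tends to amplify sampling noise on real hardware; a lower-order least-squares or exponential fit trades some bias for stability.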
